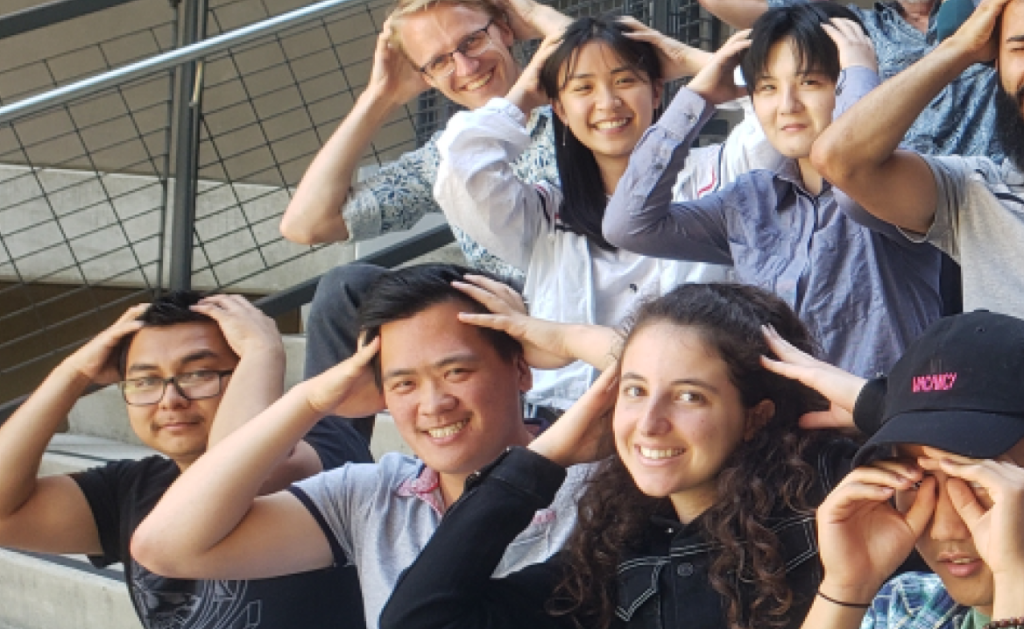

Embodied Coding

How can augmented and virtual reality support human-centered, collaborative computing?

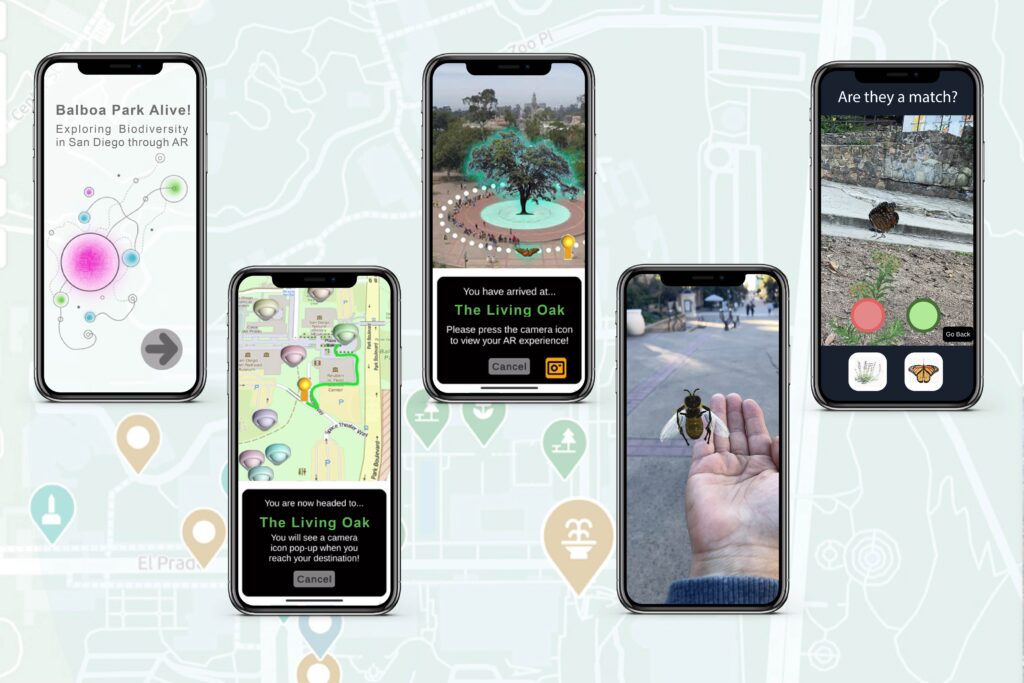

Balboa Park Alive!

This project aims to foster care for our region’s biodiversity by using augmented reality (AR) to promote ecological literacy.

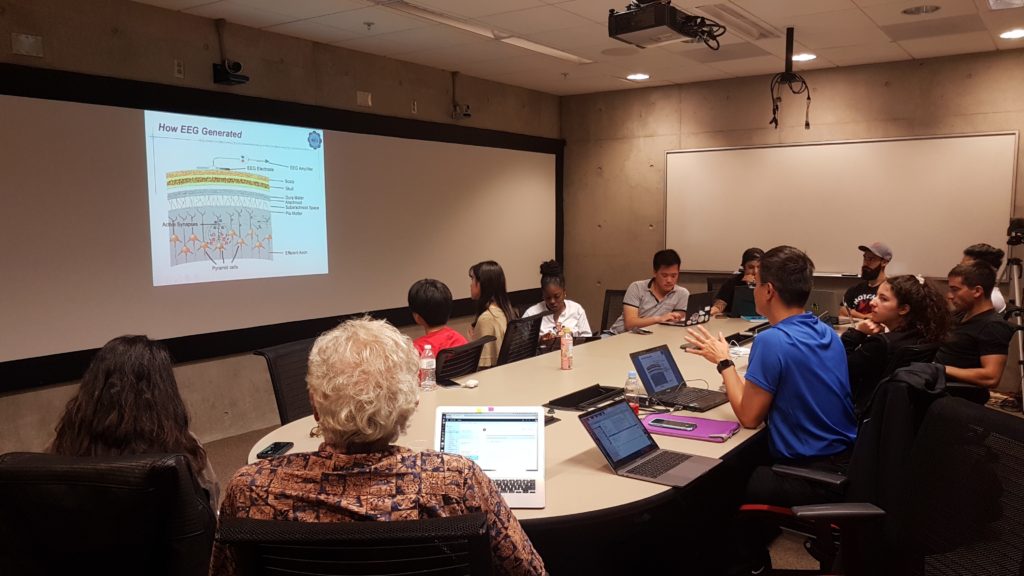

Visual Search in VR

We model ecologically valid visual search processes in 3D space using virtual reality.